Standard Ingest Procedure

Introduction

Use the Standard Ingest Service to ingest images and records into the FamilySearch Infinity System by uploading them and their associated metadata into a Transfer S3 Bucket managed by FamilySearch.

Access to the bucket is controlled by an AWS IAM Account. The credentials for the IAM account are provided to you by FamilySearch. Each account restricts access to objects named with a specific prefix assigned to that account so providers can only access their files and information in the Transfer S3 Bucket.

Here’s how it works:

- Obtain your AWS bucket account name, login credentials, and project ID from FamilySearch.

- Organize the files you want to ingest in a folder on your computer and create any new files you will need for the metadata.

- Upload your files to S3, making sure to follow the naming conventions.

- Use one of the FamilySearch status dashboards or the Status Service API to monitor your ingest. See the Monitoring Ingest Status section below for details.

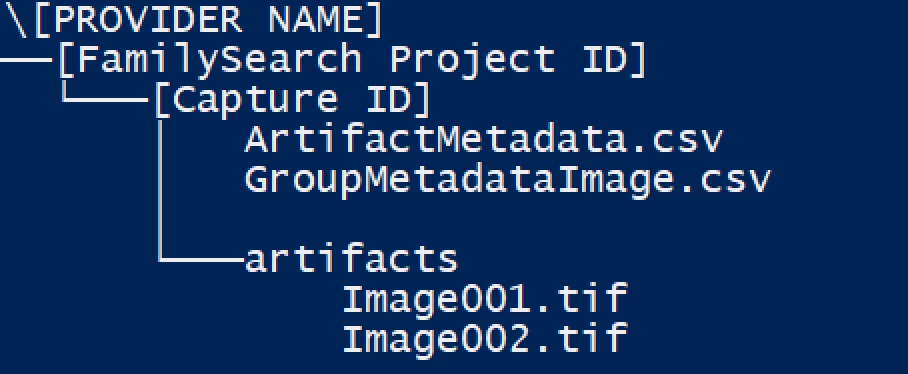

The ingest files are organized by file and directory name. Make sure you follow the specified path and naming conventions provided below so that the files can be recognized and ingested.

- If you use a third-party S3 access tool like Cloudberry to upload your files, you can stage the entire directory structure on your workstation before uploading it to S3.

- During the upload process, Cloudberry names the uploaded files with key names that match the full path names of the files on your workstation.

- If you write your own upload process, your system can rename the file names according to the naming conventions during the upload process.

Directory layout requirements for the S3 bucket

Base Subdirectory

Images and metadata files are uploaded by Groups (sometimes called Folders). The naming of objects, including their paths and file names, is important. We use the following pattern to create the base subdirectory for all image, record, and metadata files uploaded for a specific group. Note that image and record files are stored in an "artifacts" subdirectory located within the base directory.

The base subdirectory is composed of the following components:

/[Provider Name]/[FamilySearch project id]/[Capture Id]/

- Provider Name: This is the root directory under which all information will be uploaded for a specific provider. This name is also your IAM account name, and must be set up by FamilySearch before data uploading can begin.

- FamilySearch project id: The FamilySearch project id defined for this project.

- Capture Id: This is a globally unique Capture Id created by the provider specifically for this group of images.
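The pattern above can be assembled programmatically. The following is a minimal sketch, not an official FamilySearch tool: the function name `group_prefix` and the sample values are hypothetical, and the sketch assumes the S3 object key carries no leading slash (the usual convention for S3 keys).

```python
def group_prefix(provider_name: str, project_id: str, capture_id: str) -> str:
    """Return the base subdirectory (S3 key prefix) for one group.

    Assumption: the key has no leading slash, as is usual for S3 object keys.
    """
    parts = [provider_name, project_id, capture_id]
    for part in parts:
        # Reject empty components and embedded slashes, which would
        # change the depth of the path.
        if not part or "/" in part:
            raise ValueError(f"invalid path component: {part!r}")
    return "/".join(parts) + "/"


# Hypothetical sample values for illustration only.
print(group_prefix("ExampleProvider", "P-12345", "999-999-99999"))
# prints: ExampleProvider/P-12345/999-999-99999/
```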

Metadata for the groups to be ingested can be provided in one of two formats: METS or CSV. The metadata files in the base directory differ depending on the format you choose.

CSV formatted metadata files

When using the CSV format to specify metadata, you need to provide two metadata files. One file contains the group metadata and the other contains the artifact metadata. There are two possible types of Group Metadata file, depending on the type of artifacts being ingested, and one type of Artifact Metadata file.

- GroupMetadataImage.csv: use to specify group metadata for image ingests

- GroupMetadataRecord.csv: use to specify group metadata for record ingests

- ArtifactMetadata.csv: used to specify artifact data for either image or record type of ingests.

Example metadata file names and locations in S3:

- /[Provider Name]/[FamilySearch project id]/[Capture group id]/GroupMetadataImage.csv

- /[Provider Name]/[FamilySearch project id]/[Capture group id]/ArtifactMetadata.csv

Note: The CSV files must use a comma as the field separator. When a field value contains a comma or quote ("), the entire field must be enclosed in quotes, and any quotes must be "escaped" by using an extra quote ("").

For example, if you want to include this string:

Fred "Bud" Jones, Jr.

In the CSV file it would appear as:

"Fred ""Bud"" Jones, Jr."

Details of the CSV metadata files are included below.
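If you generate the CSV files with a script, a standard CSV library applies the quoting rule described above for you. As a minimal sketch, Python's built-in `csv` module with its default settings (comma separator, quotes doubled inside quoted fields) produces the escaped form shown in the example; the second and third field values here are only illustrative.

```python
import csv
import io

buffer = io.StringIO()
# Default dialect: comma separator, fields quoted only when needed,
# embedded quotes escaped by doubling them.
writer = csv.writer(buffer, lineterminator="\n")
writer.writerow(['Fred "Bud" Jones, Jr.', "Wayne, Michigan, United States", "1850"])
line = buffer.getvalue()
print(line, end="")
# prints: "Fred ""Bud"" Jones, Jr.","Wayne, Michigan, United States",1850

# Reading the line back recovers the original values.
fields = next(csv.reader(io.StringIO(line)))
print(fields[0])
# prints: Fred "Bud" Jones, Jr.
```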

Image and Record files

Image and record files are written to an "artifacts" subdirectory located in the group base subdirectory as follows:

/[Provider Name]/[FamilySearch project id]/[Capture group id]/artifacts/

Example image file names and location in S3:

- /[Provider Name]/[FamilySearch project id]/[Capture group id]/artifacts/Image001.tiff

- /[Provider Name]/[FamilySearch project id]/[Capture group id]/artifacts/Image002.tiff

The directory layout should look like the following image:

Group CSV metadata file specifications

Optional fields can be left blank

The CSV file must have a header to indicate column order and contents. The header must use the exact names from the table below. Columns for optional fields can be omitted from the file.

GroupMetadataImage.csv

| Field / Header Title | Sample | Description | Requirements | Required | METS Key |
|---|---|---|---|---|---|
| Rework | TRUE | Indicates that this is rework of previously submitted material. | TRUE or FALSE | Yes | COM_REWORK |
| Title | Michigan Births 1850-1875 | The title of the book/folder (GRMS listing title if no title is available) | | Yes | MODS_TITLE |
| Place | Wayne, Michigan, United States | The location(s) of the events recorded on the image (or other artifact). Multiple values allowed separated by bar | | Yes | MODS_PLACE_TERM |
| Start Date | 1850 | The start date of the events on the image (or other artifact) | | Yes | MODS_CREATED_START_DATE |
| End Date | 1875 | The end date of the events on the image (or other artifact) | | Yes | MODS_CREATED_END_DATE |
| Record Type | Birth certificate | The record type(s) of the image (or other artifact). Multiple values allowed separated by bar. Can be an official record type string, or the concept ID, which is preferred. | | Yes | COM_RECORD_TYPE |
| Language | English | The language(s) used on the image (or other artifact). Multiple values allowed separated by bar | | Yes | MODS_LANGUAGE_TERM |
| Record Custodian | Michigan State Archive | The organization/entity that has custody of the physical artifact | | Yes | METS_HDR_AGENT_CUSTODIAN |
| Archival Reference Number | 45-3453-345 | The ID used by record custodian (required if there is an ID available – needed for delivery) | | Yes | |
| Capture ID | 999-999-99999 | The ID used by the capturing organization (used for rework). | The Capture ID must be unique for each group ingested for a provider. | Yes | COM_GROUP_CAPTURE_ID |
| Total Artifacts | 1078 | The number of images (or other artifacts) in the group | Integer number | Yes | COM_TOTAL_ARTIFACTS |
| Capture Operator Name | SmithJane | Username of vendor associated with the Project ID | Must be a valid FamilySearch Username | Yes | COM_OPERATOR_NAME |
| Capture Operator ID | cis.user.MMM9-TGFQ | CIS ID associated with the Username that is assigned to the Project ID (this should match the username provided in the Operator Name field) | Must be a valid FamilySearch account ID (CIS ID) or be left blank. Max Length: 18 Characters (CIS ID on backend) | Yes | COM_OPERATOR_NUMBER |
| Volume | 3 | The identifier for a volume of a series of books/folders all under the same title (optional) | | No | |
| Capture Date | 2019-02-13 | The date this group was captured (optional) | | No | MODS_CAPTURED_DATE |
| Digitizing Entity | FamilySearch | The name of the entity that did the digital creation/capture of the group of artifacts (optional) | | No | METS_HDR_AGENT_CREATOR |
| Artifact Type | NBX | The type of artifacts in this group. | Match values in ArtifactMetadata.csv "Artifact Type" field. | No | |

Currently only one group per metadata file is supported. As a result, the group metadata file only contains two rows: a header row and one data row.
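A group metadata file with one header row and one data row can be generated with a short script. The sketch below is illustrative only: the function name `render_group_metadata` is hypothetical, the values are the samples from the table above (with Rework set to FALSE), and the check only confirms that every column marked as required in the table is present. It does not validate the values themselves.

```python
import csv
import io

# Header titles marked "Yes" in the Required column of the table above.
REQUIRED_HEADERS = [
    "Rework", "Title", "Place", "Start Date", "End Date", "Record Type",
    "Language", "Record Custodian", "Archival Reference Number", "Capture ID",
    "Total Artifacts", "Capture Operator Name", "Capture Operator ID",
]


def render_group_metadata(values: dict) -> str:
    """Return the CSV text: one header row followed by one data row."""
    missing = [h for h in REQUIRED_HEADERS if h not in values]
    if missing:
        raise ValueError(f"missing required columns: {missing}")
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=list(values), lineterminator="\n")
    writer.writeheader()
    writer.writerow(values)
    return out.getvalue()


sample = {
    "Rework": "FALSE",
    "Title": "Michigan Births 1850-1875",
    "Place": "Wayne, Michigan, United States",
    "Start Date": "1850",
    "End Date": "1875",
    "Record Type": "Birth certificate",
    "Language": "English",
    "Record Custodian": "Michigan State Archive",
    "Archival Reference Number": "45-3453-345",
    "Capture ID": "999-999-99999",
    "Total Artifacts": "1078",
    "Capture Operator Name": "SmithJane",
    "Capture Operator ID": "cis.user.MMM9-TGFQ",
}
text = render_group_metadata(sample)
print(text)
```

To save the result, write `text` to `GroupMetadataImage.csv` using `open(path, "w", encoding="utf-8", newline="")` so the file is stored as UTF-8.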

Ensure that the CSV file is UTF-8 encoded and comma delimited. Any field value that contains a comma must be enclosed in double quotes. If you open your CSV file in Notepad or TextEdit, it should look similar to this image:

Artifact CSV Metadata File Specifications

The CSV file must have a header to indicate column order and contents. The header must use the exact names from the table below. Columns for optional fields can be omitted from the file.

Please Note: The order of the entries in this file is maintained when the images are published, so make sure each row is in the order you want it to appear (e.g., book covers, title page, page 1, page 2).

ArtifactMetadata.csv (one row for each artifact)

| Field / Header Title | Sample | Description | Requirements | Required | METS Key |
|---|---|---|---|---|---|
| Filename | Image0004.jpg | The filename for the artifact. Note: the extension must match the actual image file type. | | Yes | mets:FLocat-href |
| File Size | 3765432 | Size of the artifact file in bytes | Integer number | Yes | mets:file-SIZE |
| Artifact Type | Image | The type of artifact (Image, Audio, Text, ...) (optional - default to image) | | Yes | mets:file-MIMETYPE |
| Capture ID | 5b17447b-e972-403c-987f-ee4346784839 | The ID used by the capturing organization (used for rework). | Each artifact included in the csv file must have its own unique Capture ID | Yes | fscommon:artifactCaptureId |
| Hash Algorithm | MD5 | The hash algorithm used for computing the checksum. | | Yes | mix:messageDigestAlgorithm |
| Hash | d4f7beaa9828bb62b58f9497dc3778cc | The actual hash value | Must be a valid MD5 hash for the file | Yes | mets:file-CHECKSUM, mix:messageDigest |
| Image Width | 5192 | The width of the image in pixels | Integer number | No | mix:imageWidth |
| Image Height | 2834 | The height of the image in pixels | Integer number | No | mix:imageHeight |

We recommend verifying that your MD5 hash is correct on 1 or 2 images. You can compare your hash with this free online MD5 hash generator: https://emn178.github.io/online-tools/md5_checksum.html (Note: we aren't sponsored by or affiliated with this website).
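The File Size and Hash values can also be computed locally. The following minimal sketch uses only the Python standard library; the function name `artifact_row` is hypothetical, and generating the Capture ID with `uuid.uuid4()` is an assumption based on the UUID-style sample in the table, not a stated FamilySearch requirement.

```python
import hashlib
import os
import uuid


def artifact_row(path: str) -> dict:
    """Build the required ArtifactMetadata.csv fields for one file."""
    md5 = hashlib.md5()
    with open(path, "rb") as f:
        # Read in 1 MiB chunks so large images are not loaded into memory at once.
        for chunk in iter(lambda: f.read(1024 * 1024), b""):
            md5.update(chunk)
    return {
        "Filename": os.path.basename(path),
        "File Size": os.path.getsize(path),  # size in bytes
        "Artifact Type": "Image",
        "Capture ID": str(uuid.uuid4()),  # assumption: any unique ID per artifact
        "Hash Algorithm": "MD5",
        "Hash": md5.hexdigest(),
    }
```

Calling `artifact_row` once per file, in publication order, yields rows that can be written with `csv.DictWriter` using the header titles from the table.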

Image Requirements

TIFF Images

TIFF images must be 1-channel 8-bit greyscale images, or 3-channel 24-bit color or greyscale images.

Record CSV Metadata File Specifications

The CSV file must have a header to indicate column order and contents. The header must use the exact names from the table below.

GroupMetadataRecord.csv

| Field | Sample | Description | METS Key |
|---|---|---|---|
| Template | UUID | The ID of the template being used for records ingest (records) | fscommon:template |
| Flat File Flavor | Ancestry | The identifier of the template mapping standard (records) | fscommon:flavor |
| Data Format | flat-file | The type of record being ingested, i.e. flat-file, gedcom, or gedcomx | fscommon:dataFormat |
| Rework | TRUE | Indicates that this is rework of previously submitted material | COM_REWORK |

Currently only one group per file is supported, so the group metadata file contains only two rows: a header row and a data row.

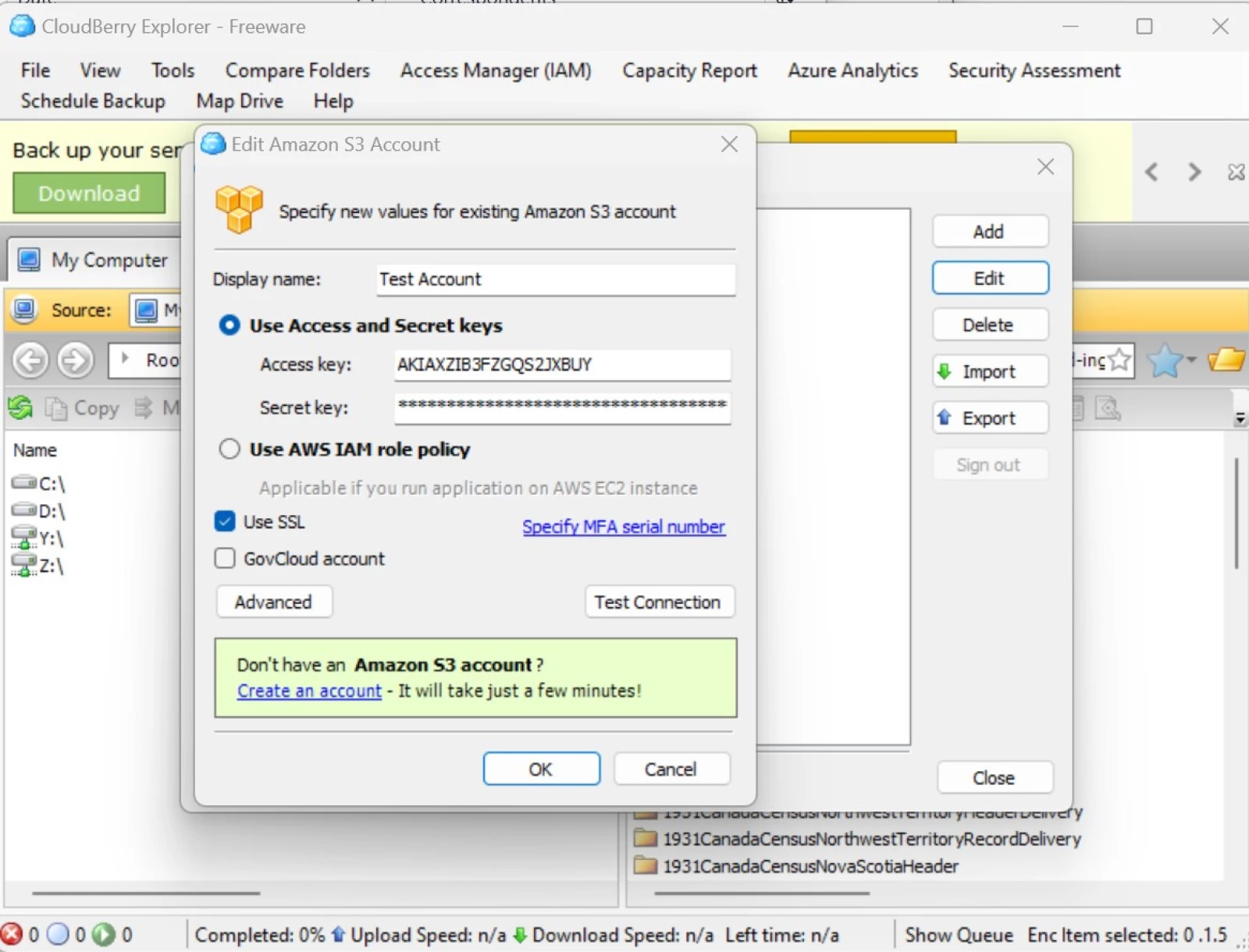

Uploading objects

Login credentials limit access to a root directory (S3 prefix) in the S3 Transfer Bucket. Bucket Name, Account Name, Access Key and Secret Key will be provided by FamilySearch. For security reasons, Access Key and Secret Key will be changed periodically.

FamilySearch has separate S3 buckets for our Development, Test, and Production environments. All three bucket names will be provided to you, and your account has access to all three. The Development and Test environments may be used for testing; however, any tests must be coordinated with FamilySearch to ensure a project is set up to receive your ingest. If a receiving project is not set up in advance, the ingest will fail.

The group metadata file (GroupMetadataImage.csv or GroupMetadataRecord.csv) serves as the completion trigger and should be the last file uploaded for a specific group.
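If you script your uploads, the ordering rule can be enforced in code. The sketch below is a minimal illustration under stated assumptions: `upload_order` and `upload_group` are hypothetical helper names, and `put` stands in for whatever function actually sends one object to S3.

```python
from typing import Callable, Iterable, List

GROUP_METADATA_NAMES = {"GroupMetadataImage.csv", "GroupMetadataRecord.csv"}


def upload_order(keys: Iterable[str]) -> List[str]:
    """Return the keys with any group metadata file moved to the end."""
    def is_trigger(key: str) -> bool:
        return key.rsplit("/", 1)[-1] in GROUP_METADATA_NAMES

    # sorted() is stable and False sorts before True, so all other keys
    # keep their relative order and the trigger file comes last.
    return sorted(keys, key=is_trigger)


def upload_group(keys: Iterable[str], put: Callable[[str], None]) -> None:
    """Send every key through `put`, group metadata file last."""
    for key in upload_order(keys):
        put(key)


# Hypothetical keys for illustration only.
keys = [
    "ExampleProvider/P-12345/999-999-99999/GroupMetadataImage.csv",
    "ExampleProvider/P-12345/999-999-99999/ArtifactMetadata.csv",
    "ExampleProvider/P-12345/999-999-99999/artifacts/Image001.tiff",
]
sent: List[str] = []
upload_group(keys, sent.append)
print(sent[-1])
# prints: ExampleProvider/P-12345/999-999-99999/GroupMetadataImage.csv
```

With the AWS SDK for Python (boto3), `put` could wrap the S3 client's `upload_file(Filename, Bucket, Key)` call. How each key maps to a local path is left to your own tooling.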

Multiple options are available for uploading the objects into S3 from your computer. For example:

- A third-party application such as Cloudberry can be used.

- Amazon provides an SDK with APIs that allow you to write files to S3.

- Standard HTTP POST requests can be made to upload files.

Cloudberry

Cloudberry provides a client application for MS Windows that you can use to copy files from your computer to the FamilySearch S3 Transfer Bucket. It is available in a free and a paid version. The main difference lies in their upload capabilities:

- The free version uploads one image at a time.

- The paid version utilizes multiple threads and simultaneous uploads of multiple files.

Depending on your internet speed, this difference can have a big impact on how long it takes to upload a large number of files.

To get started with Cloudberry:

- Download and install the application on your computer.

- Register your Amazon S3 account within the Cloudberry application using the account information provided by FamilySearch.

- To do so, open Cloudberry and navigate to "File > Amazon S3."

- Enter the Access key and Secret Key provided, and click "Test Connection" to verify, then OK to save.

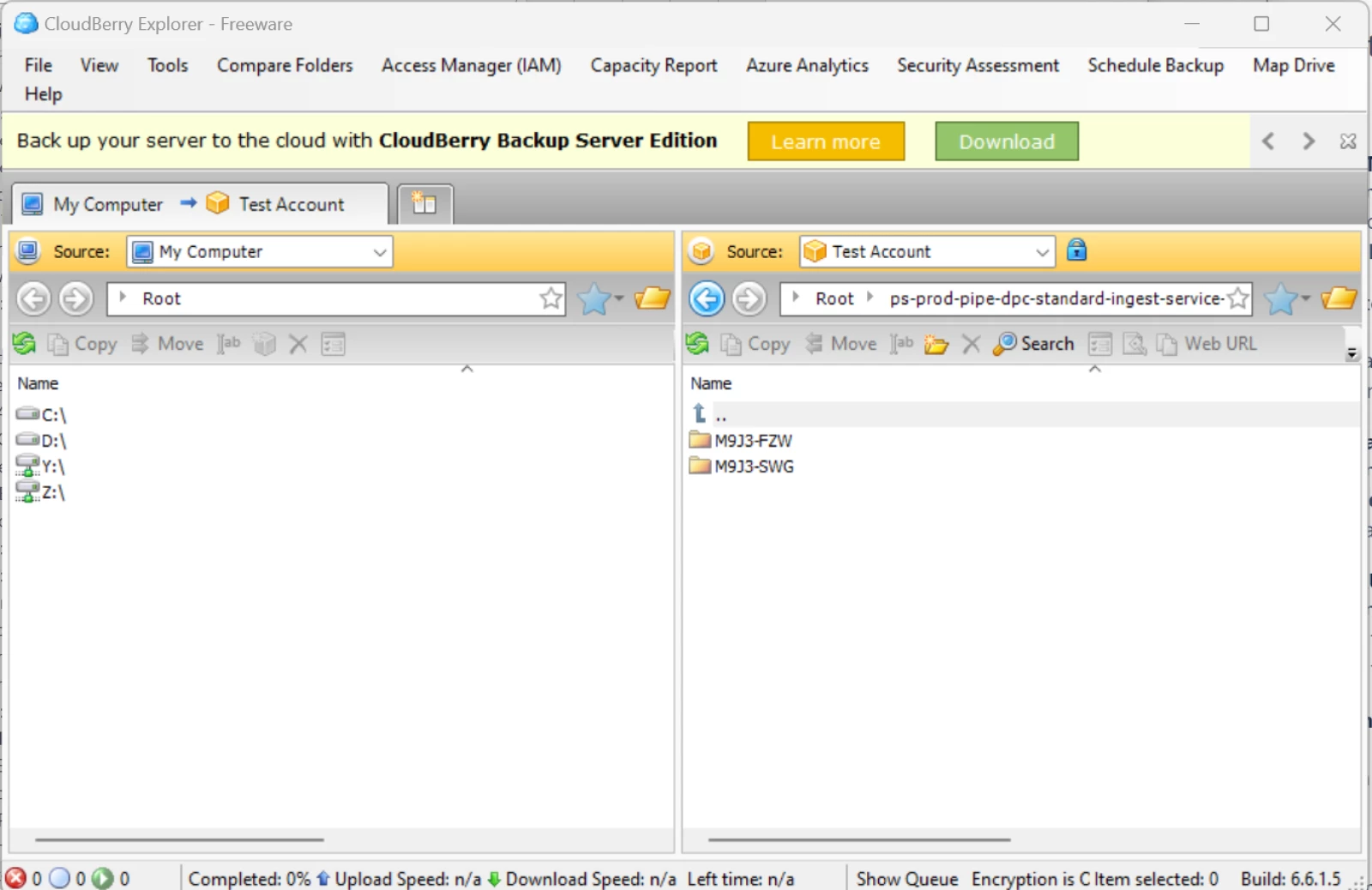

Once you have added the Amazon S3 account, you can select it from the "Source" dropdown shown on the right half of the screen.

Next, enter the S3 bucket name and account name in the folder field just below the Source dropdown.

- Use the same naming format that you would for a folder: bucketName/accountName. FamilySearch will provide you with this information.

After completing these steps, you'll see the contents of the root S3 directory for your account on the right side of the screen. Note that because you entered your account name as part of the folder string, the initial page will display files and directories in your bucket/accountName/ directory.

Due to account restrictions, you won't be able to view anything higher than this directory. Be aware that when following this procedure with Cloudberry or a similar tool, you don't need to add your accountName to the path of copied files since that is the current directory displayed on the right side.

- The accountName root is automatically included. Project ID directories should be copied to this root directory.

On the left side of the screen, you can browse folders on your computer.

To ingest, navigate to the appropriate folder location on both screens and copy the files and/or directories to be ingested into the destination folder by selecting, dragging, and dropping them.

- If you have multiple groups under a Project Name, you can copy at the Project Name level by dragging the project folder from your computer to the root directory in the S3 view.

- If you've already set up the project directory on S3, you can copy the group level (Capture Group ID) directories into the project directory.

AWS APIs for programmatic uploading to the S3 bucket

Instructions for using the AWS SDK to access S3 can be found in the AWS SDK documentation.

Monitoring Ingest Status

Use one of the FamilySearch status dashboards or the Status Service API to monitor ingest progress and identify errors. Contact FamilySearch for dashboard access.

Status Service API: PUT /status/groupStatus/{projectId}

Documentation: pipe-dpc-ingest-status: StatusController
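As a rough sketch of how a client might address the endpoint listed above, the following builds (but does not send) the request using Python's standard library. The base URL is a placeholder, and the function name `build_status_request` is hypothetical. Authentication headers and any request body are not described here, so they are omitted; consult the StatusController documentation for those details.

```python
import urllib.parse
import urllib.request


def build_status_request(base_url: str, project_id: str) -> urllib.request.Request:
    """Build a PUT request for /status/groupStatus/{projectId} without sending it."""
    # Percent-encode the project ID so it is safe to place in the URL path.
    encoded_id = urllib.parse.quote(project_id, safe="")
    url = f"{base_url.rstrip('/')}/status/groupStatus/{encoded_id}"
    return urllib.request.Request(url, method="PUT")


# Placeholder host and project ID: assumptions for illustration only.
req = build_status_request("https://status.example.invalid", "P-12345")
print(req.get_method(), req.full_url)
# prints: PUT https://status.example.invalid/status/groupStatus/P-12345
```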
